April 19, 2026

The Algorithmic Compass: Navigating Bias and Fairness in AI

How to think about AI bias as a structural problem rather than a moral failing, and what practical fairness looks like in deployed systems.


AI bias is not a bug that someone introduced through carelessness. It is a structural property of systems trained on data generated by a biased world. This distinction matters because it determines whether you approach the problem as a quality control issue (find the bias, remove it, ship the clean system) or as an ongoing navigation challenge (understand where the biases are, decide which ones are acceptable, and monitor continuously for drift).

The first approach is simpler and more satisfying. It is also wrong. Bias in AI systems cannot be fully removed because the data that trains the systems reflects real-world patterns that include historical discrimination, uneven representation, and structural inequalities. You can mitigate specific biases. You can shift the distribution. You cannot achieve a bias-free system any more than you can achieve a bias-free society. What you can achieve is a system where the remaining biases are understood, documented, and either accepted or compensated for through deliberate design choices.

What Bias Actually Is

The word "bias" carries moral weight in everyday language. But in statistical and machine learning contexts, bias has a precise technical meaning: a systematic deviation from a desired standard. A biased model is one that consistently produces outputs that deviate from the target in a particular direction.

This definition is important because it separates the observation (the model's outputs deviate) from the judgment (the deviation is wrong). Whether a particular bias matters depends on the context, the stakes, and the alternatives. A hiring model that under-recommends candidates from certain demographics is biased in a way that matters enormously. A weather prediction model that slightly overestimates afternoon temperatures is biased in a way that rarely matters.
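As a minimal sketch of the technical sense of the word, bias can be measured as the mean signed error between predictions and targets. The function name `signed_bias` and the temperature values below are illustrative assumptions, not data from any real forecasting system.

```python
from statistics import mean


def signed_bias(predictions, targets):
    """Mean signed error: positive means the model overestimates on average."""
    if len(predictions) != len(targets):
        raise ValueError("predictions and targets must have the same length")
    return mean(p - t for p, t in zip(predictions, targets))


# Hypothetical afternoon temperatures in degrees Celsius (illustrative only).
forecast = [21.5, 24.0, 19.5, 26.0]
observed = [21.0, 23.0, 19.0, 25.5]

bias = signed_bias(forecast, observed)
print(f"Mean signed error: {bias:+.3f} degrees")
```

The number only records the observation that the forecasts run warm. Whether a deviation of that size matters is the separate judgment described above.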

The discourse around AI bias often collapses these two cases into a single moral category, which makes it difficult to have practical conversations about tradeoffs. Not all biases are equal. Not all biases are harmful. And addressing one bias sometimes introduces another. Navigating these tradeoffs requires the kind of contextual judgment that appreciative mental models are designed to support.

The Measurement Problem

You cannot fix what you cannot measure, and measuring fairness in AI systems is harder than most people realize. There are multiple mathematically valid definitions of fairness, and several of them cannot be satisfied at the same time when the underlying base rates differ across groups. This is not an implementation problem. It is a mathematical result, shown by Chouldechova (2017) and Kleinberg, Mullainathan, and Raghavan (2016).

Consider a model that predicts loan default risk. You could define fairness as: the model should have the same approval rate across demographic groups (demographic parity). Or: the model should have the same true positive rate across groups (equal opportunity). Or: the model should have the same positive predictive value across groups (predictive parity). These sound compatible, but unless the groups have identical base rates or the model is degenerate, you cannot satisfy all three simultaneously.
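One way to see why the three definitions collide is a short identity. The notation here is an assumption introduced for illustration: a positive prediction means approval, Y = 1 means the applicant would repay, and equal opportunity is taken in its usual sense of equal true positive rates.

```latex
\begin{align*}
p_g &= P(Y = 1 \mid G = g) && \text{base rate} \\
s_g &= P(\hat{Y} = 1 \mid G = g) && \text{approval rate} \\
t_g &= P(\hat{Y} = 1 \mid Y = 1, G = g) && \text{true positive rate} \\
v_g &= P(Y = 1 \mid \hat{Y} = 1, G = g) && \text{positive predictive value}
\end{align*}

% Both products equal the joint probability P(\hat{Y} = 1, Y = 1 \mid G = g):
\[
t_g \, p_g = v_g \, s_g
\quad\Longrightarrow\quad
p_g = \frac{v_g \, s_g}{t_g} \qquad (t_g > 0).
\]
```

If the approval rate, true positive rate, and positive predictive value are all identical across groups, the right-hand side is identical too, so the base rates must be equal. When base rates differ, at least one of the three has to give. The same argument goes through with false positive rates in place of true positive rates, using f_g (1 - p_g) = (1 - v_g) s_g.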

This means every deployed AI system involves a choice about which definition of fairness to prioritize. That choice is not technical. It is a value judgment that requires understanding the specific context, the specific stakes, and the specific communities affected. A lending model and a criminal justice model might reasonably use different fairness definitions even though both involve consequential predictions about individuals.

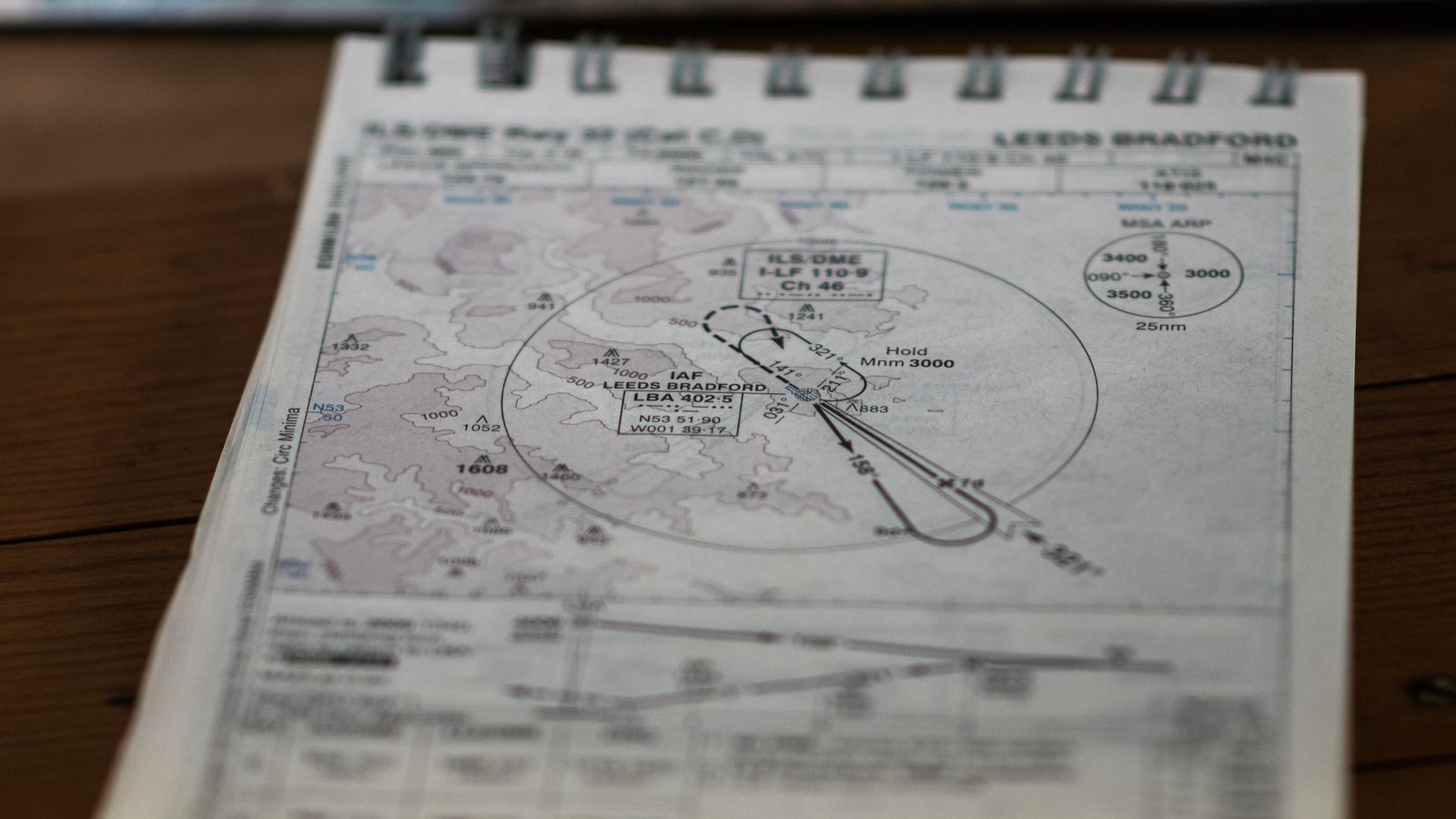

Navigating Without a Map

The metaphor of a compass is deliberate. A compass does not tell you where to go. It tells you which direction you are facing. Similarly, a fairness framework does not tell you what the right decision is. It tells you which tradeoffs you are making.

The practical approach to AI fairness in 2026 involves several components that together function as a compass for navigating bias:

Audit before deployment. Test the system's outputs across relevant demographic categories before putting it into production. Not as a checkbox exercise, but as a genuine investigation into where the model's behavior varies by group and whether those variations are acceptable given the context.

Document the tradeoffs. Every fairness choice involves a tradeoff. Make the tradeoff explicit. Write it down. If you chose to optimize for equal approval rates at the cost of higher default rates in some groups, say so. If you chose to optimize for predictive accuracy at the cost of unequal approval rates, say so. The documentation forces clarity about what you are actually doing.

Monitor in production. Bias can drift over time as the population changes, as the environment shifts, or as the model encounters inputs that its training data did not anticipate. Ongoing monitoring is not optional. It is a core system requirement for any model that makes consequential predictions about people.

Include affected communities. The people affected by a model's predictions have information about fairness that is not available in the data. Their lived experience reveals failure modes that quantitative analysis misses. Including their perspective is not a concession to political pressure. It is a source of situational awareness that improves the system's design.
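The audit and monitoring steps above can be sketched in a few lines. This is a rough illustration under stated assumptions, not a production tool: the helper names (`group_rates`, `max_gap`, `drifted`), the group labels, the records, and the tolerance are all hypothetical, and the sketch assumes true outcomes are eventually observed for every individual, which often does not hold for rejected applicants.

```python
from collections import defaultdict


def group_rates(records):
    """Per-group selection rate, true positive rate, and positive predictive
    value from (group, predicted, actual) triples with 0/1 labels."""
    counts = defaultdict(
        lambda: {"n": 0, "pred_pos": 0, "actual_pos": 0, "true_pos": 0}
    )
    for group, predicted, actual in records:
        c = counts[group]
        c["n"] += 1
        c["pred_pos"] += predicted
        c["actual_pos"] += actual
        c["true_pos"] += predicted * actual

    def ratio(num, den):
        return num / den if den else None  # None when the rate is undefined

    return {
        group: {
            "selection_rate": ratio(c["pred_pos"], c["n"]),
            "tpr": ratio(c["true_pos"], c["actual_pos"]),
            "ppv": ratio(c["true_pos"], c["pred_pos"]),
        }
        for group, c in counts.items()
    }


def max_gap(rates, metric):
    """Largest between-group difference for one metric, skipping undefined values."""
    values = [r[metric] for r in rates.values() if r[metric] is not None]
    return max(values) - min(values) if values else 0.0


def drifted(baseline_rates, current_rates, metric, tolerance):
    """True if the between-group gap widened by more than `tolerance`."""
    return max_gap(current_rates, metric) - max_gap(baseline_rates, metric) > tolerance


# Hypothetical records: (group, predicted approval, actual repayment).
audit_records = [
    ("A", 1, 1), ("A", 1, 0), ("A", 0, 1), ("A", 0, 0),
    ("B", 1, 1), ("B", 0, 1), ("B", 0, 0), ("B", 0, 0),
]
production_records = [
    ("A", 1, 1), ("A", 1, 1), ("A", 1, 0), ("A", 0, 0),
    ("B", 0, 1), ("B", 0, 1), ("B", 0, 0), ("B", 0, 0),
]

baseline = group_rates(audit_records)
current = group_rates(production_records)

for metric in ("selection_rate", "tpr", "ppv"):
    print(metric, max_gap(baseline, metric), drifted(baseline, current, metric, 0.1))
```

The code reports gaps and leaves the decision to people. Which metric to watch, and how large a gap is tolerable, are the documented value choices described above.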

The Sensemaking Challenge

AI fairness is a domain where the sensemaking cliff is particularly steep. The technical complexity is high. The moral stakes are real. The discourse is polarized between people who dismiss all fairness concerns as political correctness and people who demand impossible standards of perfect equity.

Navigating between these positions requires the same skill that all difficult sensemaking problems require: the ability to hold multiple valid perspectives simultaneously without collapsing into one of them prematurely. The model is biased and it is useful. Fairness matters and perfect fairness is mathematically impossible. Historical data reflects real patterns and those patterns include historical injustice.

These statements are all simultaneously true, and anyone who accepts only one side of each pair is operating with an incomplete picture. The thick narrative about AI fairness includes all of these tensions, holds them together without resolving them prematurely, and makes decisions that are honest about the tradeoffs rather than pretending they do not exist.

Toward Practical Fairness

Practical fairness in AI is not a destination. It is a practice. Like making decisions well, it requires ongoing attention, periodic reassessment, and the humility to acknowledge that your current approach, however carefully designed, will need revision as understanding deepens and conditions change.

The organizations that handle AI fairness best are not the ones with the cleverest debiasing algorithms. They are the ones that have built the institutional capacity to navigate the tradeoffs thoughtfully, document their choices transparently, and update their approach as they learn. The algorithmic compass does not point to a fixed north. It points to the best available direction given what you know now. And the willingness to recalibrate when you learn more is itself the most important fairness practice of all.